When you first created your website, you may have thought that all you had to do was organize a few pages, include text and images, and throw in some links and buttons to your contact pages. Sounds easy, right?

If only it were that simple. The truth is, creating a website that generates leads and brings new customers to your business is an ongoing process. If you want to make your site as effective and efficient as possible, that means figuring out what your potential customers respond best to. So how can you do that?

That’s where A/B testing comes in handy – and if you’re not sure what that is, you’re in the right place. This comprehensive guide will help you start testing (and improving) your site today.

An introduction to A/B testing

If you’re reading this guide, chances are that you’re curious about A/B testing. You probably know that it has the potential to improve your website conversions, and as a result, help your business. But if you’re like many business owners and marketing managers, you may not know much more than that.

Let’s start by taking a minute to look at exactly what an A/B test is and how it works.

How an A/B test works

If you remember lab sessions from your high school days, each experiment you did likely had a “control” group and a “test” group. Your control group remained the same during the experiment, while your test group was exposed to something to produce results.

A/B testing also involves control and test groups. But in this case, it’s your website that is subjected to testing, and the control and test groups are your visitors.

If you wanted to test one specific element on your website (like a button or form), the element as it currently exists would be shown to a control group, labeled “A.” A new version of the element would be shown to the test group, labeled “B.”

You’ll also select an A/B test sample size, which is the percentage of your total audience you will test the new element on.
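To make the split between groups concrete, here is a minimal sketch of one common way to assign visitors: hash a visitor ID so that the same person always lands in the same group. The function name `assign_group`, the experiment label, and the visitor IDs are hypothetical, and the testing tools covered later handle this step for you.

```python
import hashlib


def assign_group(visitor_id: str, test_share: float = 0.5,
                 experiment: str = "button-color") -> str:
    """Return "A" (control) or "B" (test), stable across repeat visits."""
    if not 0.0 <= test_share <= 1.0:
        raise ValueError("test_share must be between 0 and 1")
    # Hash the experiment name together with the visitor ID so that
    # separate experiments split the same audience independently.
    digest = hashlib.sha256(f"{experiment}:{visitor_id}".encode("utf-8")).hexdigest()
    # Map the first 8 hex digits to a number in [0, 1).
    position = int(digest[:8], 16) / 0x100000000
    return "B" if position < test_share else "A"


# Hypothetical example: show the new element to roughly 20% of visitors.
groups = [assign_group(f"visitor-{n}", test_share=0.2) for n in range(10_000)]
share_b = groups.count("B") / len(groups)
print(f"Share of visitors in group B: {share_b:.1%}")
```

Hashing rather than picking at random on every page load means a returning visitor keeps seeing the same version, which keeps the comparison clean.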

At the end of the test, the results are compared to see which group of visitors reacted better to the element. If the A group had a better reaction, the element should remain the same. If the B group had a better reaction, the element should be changed to match.

What defines a better “reaction”? It’s usually conversions – that is, the number of people who make a purchase, fill out a form, or perform some kind of other desired action encouraged by your site.

Why should I A/B test?

No website is perfect. There is always room for improvement, and even with extensive knowledge of your target audience, it’s impossible to predict what they’ll respond best to without testing.

You may assume that your customers and leads respond best to a blue button, only to find out that a green one gets much more attention. Or you may think that placing product copy on the side of a page boosts conversions, but putting it beneath the photos is what gets people clicking.

Without A/B testing, you’ll never know if there’s something minor killing your conversions. But by running and monitoring various tests, you’ll be able to unlock hidden earning potential in your website – increasing your leads and revenue bit by bit.

What you’ll need to get started

To get started with A/B testing, you’ll need the following:

- A website that you have full access to (via CMS, FTP, etc.)

- A/B testing software – we’ll cover a few options shortly

- A list of the elements you want to test

- Patience – because the best test results typically take 30+ days

Once you’ve prepared everything, you’re ready to kick off your A/B testing!

Let’s now examine the specific elements you’ll want to consider testing on your site. If you’re not sure what to test first, this should help!

What should you test?

As we mentioned previously, most A/B tests focus on elements that play some kind of role in conversions. This is why A/B testing is considered a major aspect of conversion rate optimization (or CRO) – that is, the practice of improving a website to boost conversions.

This doesn’t just limit you to changing the size and color of your “add to cart” button or the appearance of your “contact us” form, however. Let’s explore some of the elements on your site that might be perfect for testing.

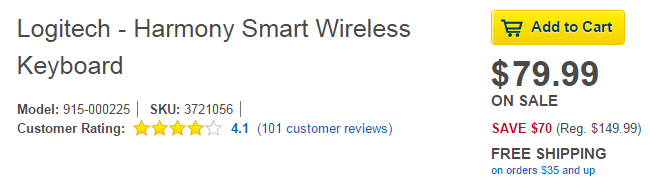

Buttons

If you use buttons anywhere on your site, these are an easy testing element to start with. Try changing the color, size, or even shape of your buttons to see if visitors respond any differently.

You may not expect switching the color of an “add to cart” button to have any noticeable effect on your conversions, but several tests have demonstrated that color can have a big impact on a visitor’s decision to click. In fact, a test detailed by HubSpot shows that a change from green to red boosted conversions for one site by 21%!

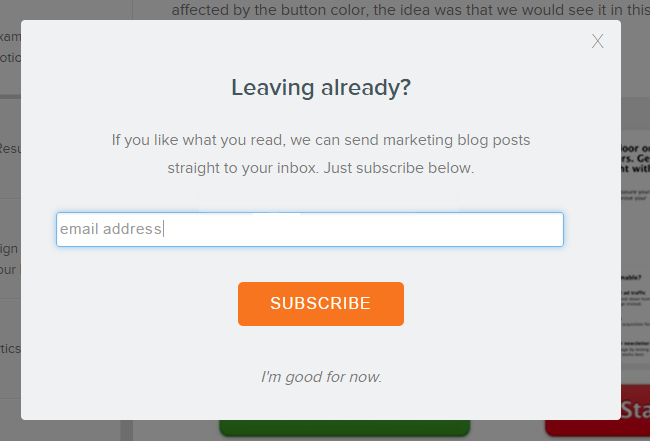

Calls to action

Along with calls to action that take the form of buttons, you should also think about testing text or link calls to action (CTAs). Using stronger or more targeted language can motivate an increased number of visitors to take the action you desire.

For example, if you end a page of content with a link to an additional page full of related information, you might test changing the color of the link, using a slightly different font, making the link longer, or just changing your wording.

Also, if you have a very important call to action, you might try testing it in a pop-up instead of simply placing it on the page. Pop-ups can be highly effective, but you’ll need to be careful to make sure they don’t drive conversions down by pushing your visitors away!

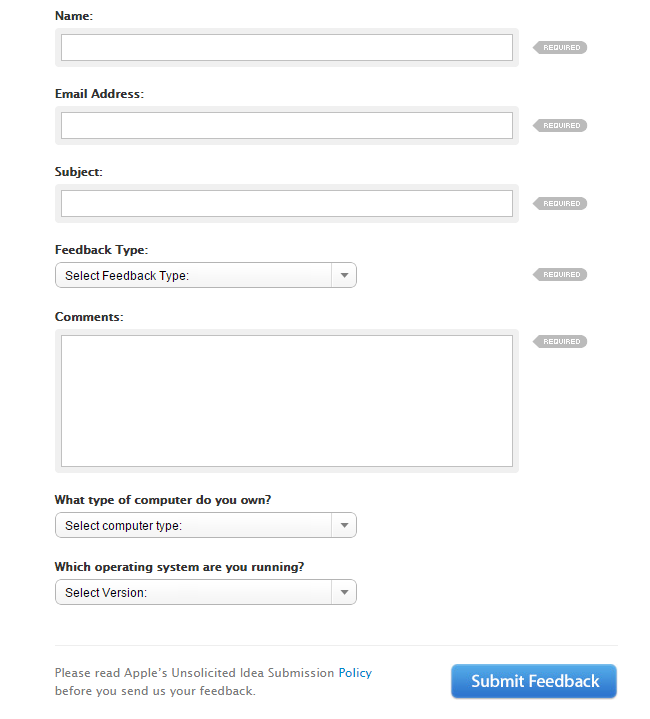

Forms

If your website collects leads with a contact form, there’s a lot riding on that one form. Consider testing its length, number of fields, or appearance to see if you can collect more leads.

One important thing to consider with forms: sometimes, you can drive away potential leads simply by asking for too much information. You may want to test reducing the number of fields to a minimum, or adding clearer labels to indicate what is optional vs. required.

In addition to contact forms, any form on your site can be tested as well. For example, if you run an ecommerce website, you can try a one-page checkout vs. a multi-page process to see what your visitors prefer. You can also test different labels on your checkout forms, pre-filling fields, and so on.

Locations

A little earlier, we gave the example of product copy being placed on the side of a page instead of below the product images. This is one example of a location-based test. Maybe a button on your page would perform better in a different position on your page, or a link would receive more clicks in a different place.

You can also test the locations of elements like signup forms for your email list, or anything else that may be linked to conversions. It’s possible that an email CTA doesn’t have much effect at the top of your website, but converts extremely well at the bottom, where visitors arrive after reading your content.

Visual elements

If you can change it, you can test it. The visual elements of your website that you should test include colors, fonts, layout, spacing, special effects, and much more.

An easy test would be to change the color of your site’s background. If you use a dark background, try testing a lighter one to see if visitors think the B version is easier on their eyes – and worth browsing a little longer.

Other ideas

What else can you test? The sky’s the limit, as long as it’s an element you can change, and that you can effectively measure the results for after a set period of time has passed.

If your company has an ecommerce site, you may even consider doing A/B tests for your pricing. This may involve showing pricing packages differently, or even reducing the costs of some plans for a test group to see if more of them show interest.

A/B testing software

Now that you know why you should run A/B tests and have a few ideas for elements to test, you’re probably wondering how to get started.

There are a number of A/B testing software options that allow you to set up, monitor, and review tests on your website. Some of them are free, while others have a monthly fee. What you pay depends largely on the included features of each program.

Let’s take a look at some of the most popular A/B testing options, starting with Google’s own Content Experiments program.

Google Analytics Content Experiments

Google Analytics Content Experiments are a good way to start if you have access to Google Analytics. With Content Experiments, you can send random samples of your visitors to different versions of your pages.

Content Experiments are a little different from other A/B tests in that they allow you to test more than just an A and B group. You can actually test up to 10 versions of the same page at one time, each with different elements.

To set up a Content Experiment:

- In Google Analytics, click “Behavior,” then “Experiments.”

- Click the “Create Experiment” button.

- Select what you want to test (in the form of a goal), the amount of time you want the test to run, and the percentage of site traffic you want to experiment with.

- Add the URLs of the pages you want to test against each other.

- Place the provided experiment code on the original page, immediately after the opening head tag.

- Confirm the code is working, then start the experiment.

Content Experiments have one caveat: you have to create multiple versions of the pages you want to test. These pages have to be fully functional and standalone – that is, they have to work independently of one another and look normal if accessed manually.

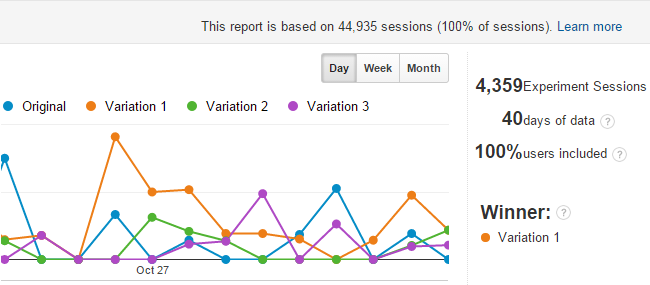

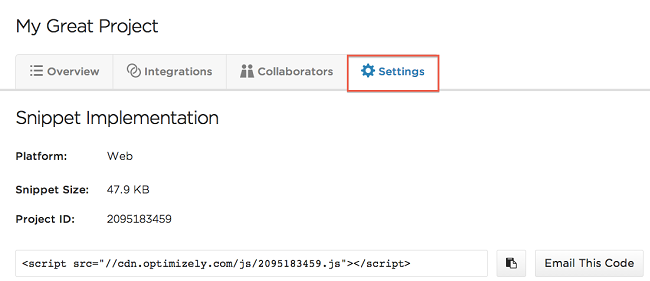

Once your experiment concludes, you can review the results in Google Analytics.

If a winner is found, it will be declared based on the number of conversions, conversion rate, and how it compares to the original version of the page. Google will also offer a probability of the test version outperforming the original in the long run, which you will want to be as close to 100% as possible.
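To illustrate what a "probability of outperforming the original" can mean, here is a rough sketch of one common way to estimate such a figure from raw counts. It is not Google's actual calculation. It assumes a simple Bayesian model with a uniform prior, and the visitor and conversion counts are made up for the example.

```python
import random


def probability_b_beats_a(conv_a: int, visitors_a: int,
                          conv_b: int, visitors_b: int,
                          draws: int = 20_000, seed: int = 42) -> float:
    """Estimate how often B's true conversion rate exceeds A's."""
    rng = random.Random(seed)  # fixed seed so the estimate is repeatable
    wins = 0
    for _ in range(draws):
        # Beta(1 + conversions, 1 + non-conversions) is the posterior
        # for a conversion rate under a uniform prior.
        rate_a = rng.betavariate(1 + conv_a, 1 + visitors_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + visitors_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws


# Hypothetical counts: 100 of 5,000 visitors converted on the original,
# 130 of 5,000 converted on the variation.
chance = probability_b_beats_a(100, 5_000, 130, 5_000)
print(f"Estimated probability that B beats A: {chance:.1%}")
```

The closer this estimate gets to 100%, the less likely it is that the variation only looks better by luck.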

Optimizely

Optimizely is an A/B testing platform that allows website owners to run and monitor tests with very little technical knowledge. Instead of creating multiple versions of a page, Optimizely allows you to create tests that “overlay” your control page.

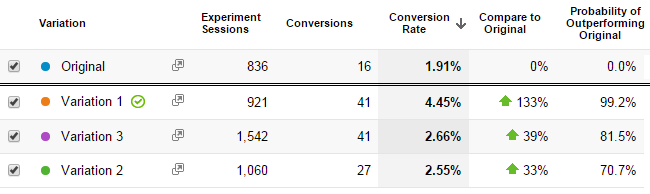

To use Optimizely, you simply need to sign up – they offer either a free plan or a custom-quoted Enterprise version – and add your custom JavaScript snippet in the head of your website. This snippet, which only needs to be implemented once, is what allows Optimizely to change the content of your pages as they load.

Once your snippet is in place, you can use Optimizely’s visual editing tool to select any page on your website and create a test. For example, you might pick a particular page and insert a promotional image, or change the color of an “add to cart” button. You can then access Optimizely once the test has run its course to see the results.

If you have a winning test, Optimizely can’t make the changes to your site for you – you’ll need to do that yourself. However, using this tool will give you the confidence to implement even major changes permanently.

VWO

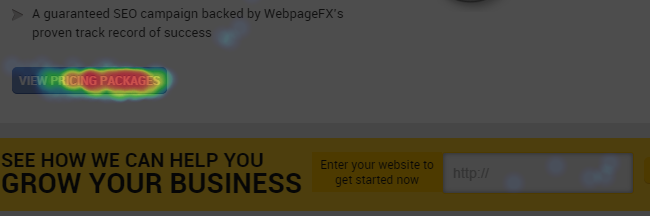

Much like Optimizely, VWO only requires you to insert a line of code into your website to enable A/B testing functionality. However, the advantage of this software is that it provides additional tools like heatmaps, user feedback, and personalized content tests based on location or device.

VWO is pricier than other options, but the basic plan starts at only $9 per month, which is ideal for beginners who want to run tests with fewer than 2,000 visitors. For larger sites, VWO costs $49 per month or $499 per year.

To set up a test with VWO, implement your “smart code” in the head of your website. Then, use the visual editor to load a page of your choice and make changes for a B version. Once the test is running, you can take advantage of additional tools like clickmaps and heatmaps to get a better handle on the results.

Remember: once you’ve determined a winner for your test, you’ll need to implement it yourself. You can leave VWO running forever if you like, but running the tests can sometimes slow load times.

Unbounce

The final tool on our list isn’t solely an A/B testing tool, but it does have some A/B test options, so it’s worth including!

Unbounce is a “what you see is what you get,” or “WYSIWYG,” landing page creator that allows you to create dedicated landing pages for products, services, promotions, or any other purpose. You can build pages in a matter of minutes, then simply duplicate them to create different versions that run simultaneously.

The landing pages you create with Unbounce are great-looking and easy to build. You don’t have to know HTML to create or update a page, or even to run an A/B test. And testing is built right in, so you can see the results of any given experiment simply by visiting the dashboard.

Unbounce starts at $49 per month for 5,000 unique visitors. However, since this tool has applications outside of A/B testing – that is, you can build an unlimited number of landing pages for any campaign or promotion of your choice – it may be worth the cost for your business.

How to run a test

The process for setting up an A/B test will vary based on the software you’ve chosen. However, there are certain steps you should follow regardless of the platform you use, which we’ll outline here.

Decide what you want to test

Try to narrow your focus down as much as possible for your test. While it is possible to test multiple elements on a page at once, this will keep you from pinpointing exactly which change contributed to a fluctuation in conversion rates.

Let’s say you think your “start now” button isn’t converting well. You may decide to test the current version against a version that is a different color. That’s your test: button colors. Avoid complicating things by changing the button’s text or location at the same time, because even if the new version works, you won’t know why.

Choose the variation(s)

What do you want to change in your test version? In the example above, it’s the color of a button. If your current button is purple, but you want to try green, the green button will be your variation.

If you’re only testing a small factor like button color, you can always add a third variation (or more!). For example, you could test your current purple against both green and blue to see if color can make a difference in conversions.

One thing to keep in mind: the more variations you create, the more site visitors you’ll need to achieve statistical significance (more on that below).
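To see why extra variations call for more traffic, here is a minimal sketch using a standard sample size approximation for comparing two conversion rates. The 5% significance level, 80% power, the Bonferroni adjustment for multiple variations, and the example rates are all assumptions chosen for illustration. Dedicated calculators may use different methods.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variation(baseline_rate: float, expected_rate: float,
                           variations: int = 1, alpha: float = 0.05,
                           power: float = 0.8) -> int:
    """Approximate visitors needed in EACH group (control and every variation)."""
    if baseline_rate == expected_rate:
        raise ValueError("the two rates must differ")
    # Bonferroni adjustment: each extra variation is one more comparison
    # against the control, so each comparison gets a stricter threshold.
    adjusted_alpha = alpha / variations
    z_alpha = NormalDist().inv_cdf(1 - adjusted_alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_power) ** 2 * variance / effect ** 2)


# Hypothetical goal: detect a lift from a 2% to a 3% conversion rate.
for count in (1, 2, 3):
    per_group = visitors_per_variation(0.02, 0.03, variations=count)
    total = per_group * (count + 1)  # the control group counts too
    print(f"{count} variation(s): {per_group} visitors per group, {total} in total")
```

Each added variation raises the total in two ways: there is one more group to fill, and every group needs slightly more visitors to keep the overall risk of a false positive in check.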

Start your test

Use your tool of choice to create your test. Double-check that it works in a few different browsers to ensure that it’s running properly – and that visitors can see it – before you leave it alone for a while.

Wait

Waiting is the hardest part of an A/B test. No one wants to sit around and wait for data to be collected, but for most tests, you’ll have to do that for at least two weeks.

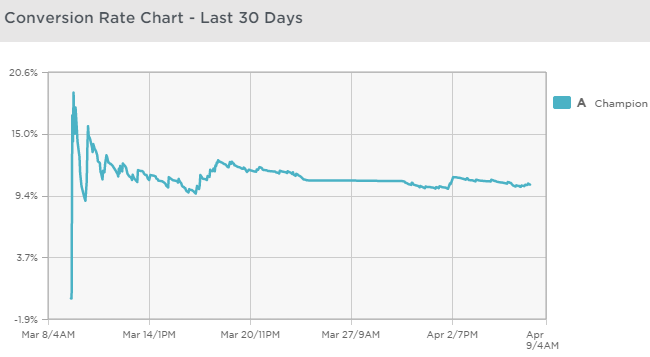

If you have an enormous number of site visitors, you might not have to wait that long, especially if your test version starts outperforming the control right out of the gate. But it’s still best to give the test time to “level out,” just in case there are coincidences or other factors at play.

Look for statistical significance

As you prepare to end your A/B test, look for results that are statistically significant – that is, results that are unlikely to be the product of random chance.

If your A version of a page converted at 2.17% while the B version converted at 2.19%, the difference is probably not significant. A gap of 0.02 percentage points can easily come from chance alone, and it might only represent a few cents of revenue, or a single lead among thousands.

On the other hand, if your A version converted at 2.17% and the B version converted at 4.17%, this probably is significant, provided each version was seen by enough visitors. Depending on the amount of traffic or leads, this difference of 2 percentage points could represent hundreds of dollars, and maybe even more in the long term.
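One common way to check whether a difference like these is likely to be due to chance is a two-proportion z-test. The sketch below is a minimal illustration, assuming each version was shown to 10,000 visitors (a made-up figure, since the example rates above come without visitor counts). A p-value below 0.05 is a widely used, though arbitrary, threshold for calling a result significant.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, visitors_a: int,
                           conv_b: int, visitors_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    # Pooled rate: the conversion rate if both versions performed the same.
    pooled = (conv_a + conv_b) / (visitors_a + visitors_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / visitors_a + 1 / visitors_b))
    if std_error == 0:
        raise ValueError("need at least one conversion and one non-conversion")
    z_score = (rate_b - rate_a) / std_error
    return 2 * (1 - NormalDist().cdf(abs(z_score)))


# 2.17% vs. 2.19% of 10,000 visitors each: 217 vs. 219 conversions.
p_small_gap = two_proportion_p_value(217, 10_000, 219, 10_000)
# 2.17% vs. 4.17% of 10,000 visitors each: 217 vs. 417 conversions.
p_large_gap = two_proportion_p_value(217, 10_000, 417, 10_000)

print(f"2.17% vs. 2.19%: p-value = {p_small_gap:.3f}")
print(f"2.17% vs. 4.17%: p-value = {p_large_gap:.3g}")
```

With these counts, the first comparison falls far short of the 0.05 threshold, while the second clears it comfortably.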

Some tests will show better results if you wait them out just a little longer. You can try out VWO’s duration calculator to get a good idea of how long you should plan on letting your test run.

Reviewing the results

Once enough time has passed, you’ll be ready to evaluate the results of your A/B test.

Here are a few things you should look for when reviewing your results.

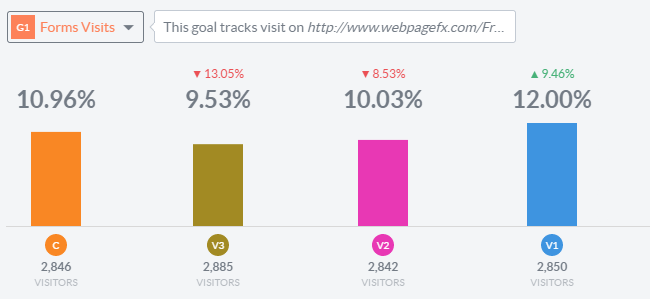

A clear preference

We mentioned statistical significance earlier, and that idea bears repeating. When you’re looking over your test results, you should be trying to find data that shows a clear preference by your visitors, or very visible results.

Don’t make the mistake of looking at percentages alone. A 33% increase in conversion rate may sound pretty impressive, but if your sample size is so small that this only represents two additional leads, it’s really not compelling evidence.
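Here is a small worked example of that trap. It assumes hypothetical counts of 6 leads from 200 visitors on version A and 8 leads from 200 visitors on version B, and uses a simple normal-approximation interval, which is only a rough guide with counts this small.

```python
from math import sqrt


def lift_and_interval(conv_a: int, visitors_a: int,
                      conv_b: int, visitors_b: int, z: float = 1.96):
    """Return the relative lift and a ~95% interval for the rate difference."""
    if conv_a == 0:
        raise ValueError("relative lift is undefined when A has no conversions")
    rate_a = conv_a / visitors_a
    rate_b = conv_b / visitors_b
    relative_lift = (rate_b - rate_a) / rate_a
    # Standard error of the difference between two independent proportions.
    std_error = sqrt(rate_a * (1 - rate_a) / visitors_a
                     + rate_b * (1 - rate_b) / visitors_b)
    difference = rate_b - rate_a
    return relative_lift, (difference - z * std_error, difference + z * std_error)


# Hypothetical small test: two extra leads looks like a 33% increase.
lift, (low, high) = lift_and_interval(6, 200, 8, 200)
print(f"Relative lift: {lift:.0%}")
print(f"Plausible range for the true difference: {low:+.1%} to {high:+.1%}")
```

The plausible range here stretches from below zero to above it, so the data cannot rule out that version B is actually no better, or even worse, than version A.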

Notable sample sizes

When you end an A/B test, it should be because you’ve accumulated a fairly high number of visitors who have been exposed to each variation.

Depending on the size of your site, you may find that 1,000 visitors is a good sample size, and demonstrates a clear preference. But if you have a larger site with many variations, you may have to wait until you hit 100,000. The exact number isn’t all that important, but a test with small sample sizes may give you misleading results.

It’s usually recommended that you wait at least a month before drawing any conclusions from an A/B test. So if you know what your average monthly traffic is, you can use this to come up with a rough estimation of how many visitors you’ll need before ending the test.
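That rough estimation can be sketched in a few lines. The function name and all the figures below are hypothetical. Swap in your own traffic numbers and whatever sample size you have settled on.

```python
from math import ceil


def estimated_test_days(visitors_needed_per_version: int, versions: int,
                        monthly_visitors: int, traffic_share: float = 1.0) -> int:
    """Estimate how many days a test needs to reach its target sample size.

    versions counts the control too, so a simple A/B test has 2.
    traffic_share is the fraction of site traffic included in the test.
    """
    if monthly_visitors <= 0 or not 0.0 < traffic_share <= 1.0:
        raise ValueError("need positive traffic and a share between 0 and 1")
    daily_visitors_in_test = monthly_visitors / 30 * traffic_share
    total_needed = visitors_needed_per_version * versions
    return ceil(total_needed / daily_visitors_in_test)


# Hypothetical site: 6,000 visitors per month, aiming for 4,000 per version.
days_full_traffic = estimated_test_days(4_000, 2, 6_000)
days_half_traffic = estimated_test_days(4_000, 2, 6_000, traffic_share=0.5)
print(f"All traffic in the test: about {days_full_traffic} days")
print(f"Half the traffic in the test: about {days_half_traffic} days")
```

If the estimate comes out much longer than you can wait, consider testing a bolder change or including more of your traffic in the test.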

Possible trends

Try to be aware of any trends or broader conclusions that you may be able to draw from your test, especially if it isn’t your first one. Even one test on a single form or button may teach you lessons about your visitors and how they interact with your site.

For example, you may find that shortening the length of a landing page boosted conversions significantly. Could it be that the other pages on your site are too long as well? Maybe you aren’t adding calls to action early enough?

Actionable data

Finally, it’s up to you to ensure that the elements you’re testing will provide you with results that are actionable. If you’re testing button colors, it’s easy enough to say “this variation won, so let’s make all of our buttons green!” But if you choose to test multiple elements at once (or do so by accident), it’s not as easy to decide what actions you should be taking.

Further resources

We hope you’ve enjoyed reading this beginner’s guide to A/B testing! To wrap things up, here are a few more resources you might find useful:

- 50 Split Testing Ideas – A list of ideas you can use for your tests, many of which go beyond your website.

- A/B Test Calculator – An advanced calculator to help you determine whether or not your upcoming test will give you significant results. Pick “pre-test analysis” to try this feature. You may have to spend a little time with the tool to learn it, but it’s worth it!

- A/B Test Duration Calculator – VWO’s tool to help you determine how long you should run your test.

- How to Build a Strong A/B Testing Plan That Gets Results – Make long-term plans by following each step in this detailed guide.

- 25 Tips to Increase Your Conversion Rate – Another one of our guides, this offers you 25 tips – and potential A/B testing ideas – to boost your website’s conversion rate.

- A Beginner’s Guide to A/B Testing Email – Ready to go beyond your website? Check out this guide to learn how to start A/B testing your email marketing, too.
